Prototype realized with the support of ART&TECHLAB 2019, Filmakademie Baden-Württemberg

Artificial Intelligence as an Artist's Tool

Live performances are often accompanied by visual elements that interact directly with the artists on stage. For certain venues, an expressive and personal style is valued more than a digital look. However, the expressiveness of hand-drawn animation is impossible to achieve in a live context because of the high production effort and time it requires. Pre-produced content, on the other hand, forces the artists to perform within a fixed time frame and limits their artistic freedom. The project AInim offers a way to combine the dynamic synergy between sound and motion achieved by reactive computer graphics with the analog expressiveness of hand-drawn animation. The AInim prototype was built in collaboration with Irina Rubina. Her animated short "JazzOrgie" served as the foundation for a flexible audio-reactive environment that carries the original artistic intent over into a system that allows user interaction and can be controlled entirely by the artist.
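The text does not describe how the environment reacts to audio. One common approach, shown here only as a hedged sketch and not as AInim's actual implementation, is to measure the loudness (RMS) of each audio frame, smooth it with an envelope follower, and map the result onto a scene parameter. The function names and the `attack`, `release`, and `gain` parameters are hypothetical.

```python
import math


def frame_rms(samples):
    """Root-mean-square amplitude of one audio frame (floats in [-1, 1])."""
    if not samples:
        return 0.0
    return math.sqrt(sum(s * s for s in samples) / len(samples))


def envelope_follower(frames, attack=0.5, release=0.1):
    """Smooth per-frame RMS values into a control signal.

    attack/release are hypothetical smoothing coefficients in (0, 1]:
    larger values let the envelope follow rising/falling levels faster.
    """
    level = 0.0
    out = []
    for frame in frames:
        target = frame_rms(frame)
        # Rise quickly on loud onsets, decay slowly afterwards.
        coeff = attack if target > level else release
        level += coeff * (target - level)
        out.append(level)
    return out


def map_to_param(level, lo=0.0, hi=1.0, gain=1.0):
    """Map an envelope level onto a scene parameter, clamped to [lo, hi]."""
    return max(lo, min(hi, lo + gain * level * (hi - lo)))


if __name__ == "__main__":
    frames = [[0.0] * 4, [1.0, -1.0, 1.0, -1.0], [0.0] * 4]
    for lvl in envelope_follower(frames):
        # e.g. drive a hypothetical object scale between 1.0 and 3.0
        print(round(map_to_param(lvl, 1.0, 3.0), 3))
```

Separate attack and release rates let visuals jump on a beat but fade out gradually, so they do not flicker with every sample-level fluctuation.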

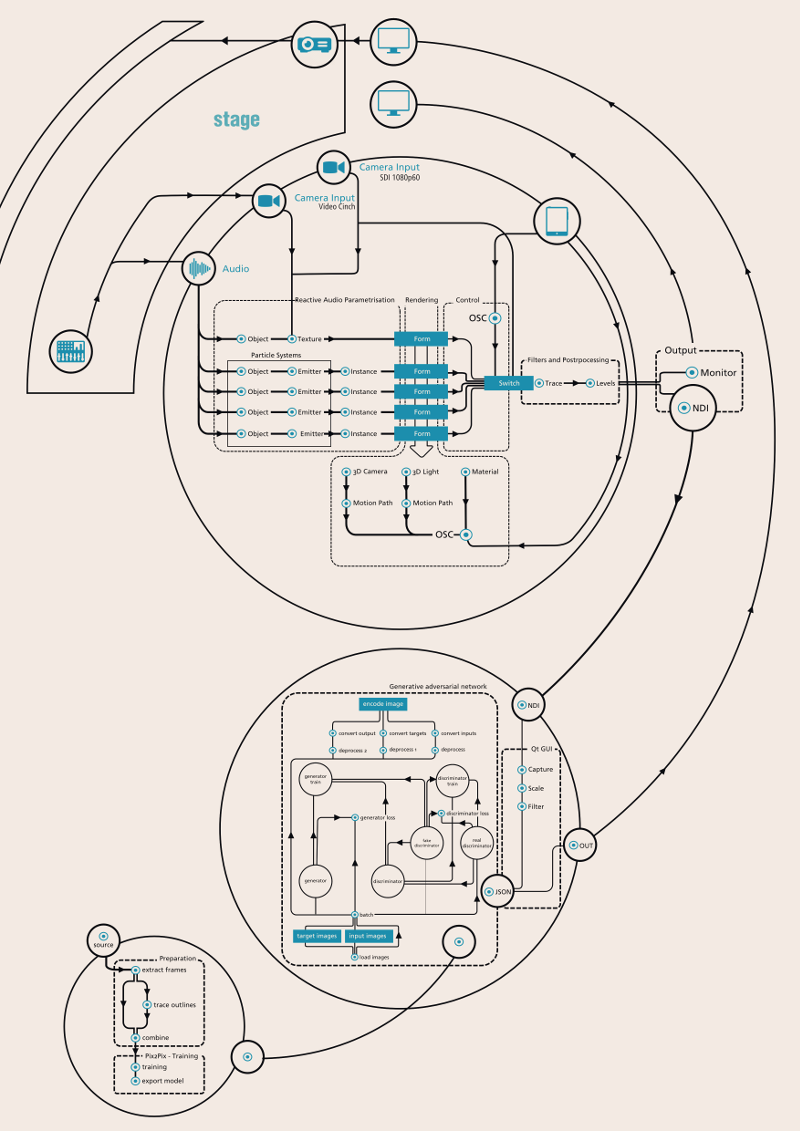

Technical Setup

The project explores the possibilities of so-called Artificial Intelligence (AI) in conjunction with art and artistic processes. It combines 3D-generated content that reacts to sound with an AI setup that transfers the structure of hand-drawn animations onto the 3D input. Combining the fast reaction times and precision of computer-generated graphics with the organic feel of painted animation unites the best qualities of both worlds. Within the experimental setup, the artists who created the original imagery are in direct exchange with the programming of the configuration and also take part in the theoretical reflection on technology and artistic practice. The AI system runs a conditional generative adversarial network (cGAN) that reconstructs objects from edge maps.
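A cGAN that reconstructs images from edge maps needs its 3D frames converted into edge maps first. The source does not say which edge detector AInim uses. As an assumption, this sketch substitutes a simple Sobel gradient with a threshold to show what such a conditioning input looks like. `sobel_edge_map` and its `threshold` parameter are hypothetical.

```python
import math

# Sobel kernels for horizontal (x) and vertical (y) intensity gradients.
KX = [[-1, 0, 1], [-2, 0, 2], [-1, 0, 1]]
KY = [[-1, -2, -1], [0, 0, 0], [1, 2, 1]]


def sobel_edge_map(gray, threshold=1.0):
    """Return a binary edge map (1 = edge) for a 2D grayscale image.

    gray: list of rows of floats. Border pixels are left at 0 because
    the 3x3 kernel does not fit there. threshold is a hypothetical
    cutoff on the gradient magnitude.
    """
    h, w = len(gray), len(gray[0])
    edges = [[0] * w for _ in range(h)]
    for y in range(1, h - 1):
        for x in range(1, w - 1):
            gx = gy = 0.0
            for j in range(3):
                for i in range(3):
                    p = gray[y + j - 1][x + i - 1]
                    gx += KX[j][i] * p
                    gy += KY[j][i] * p
            if math.hypot(gx, gy) >= threshold:
                edges[y][x] = 1
    return edges


if __name__ == "__main__":
    # A dark-to-bright step: the edge is detected along the boundary.
    img = [[0.0, 0.0, 1.0, 1.0, 1.0] for _ in range(5)]
    for row in sobel_edge_map(img):
        print(row)
```

In a pix2pix-style setup, a generator would be trained on pairs of such edge maps and the corresponding hand-drawn frames, so that at performance time it can turn edges extracted from the live 3D render into imagery in the drawn style.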

About

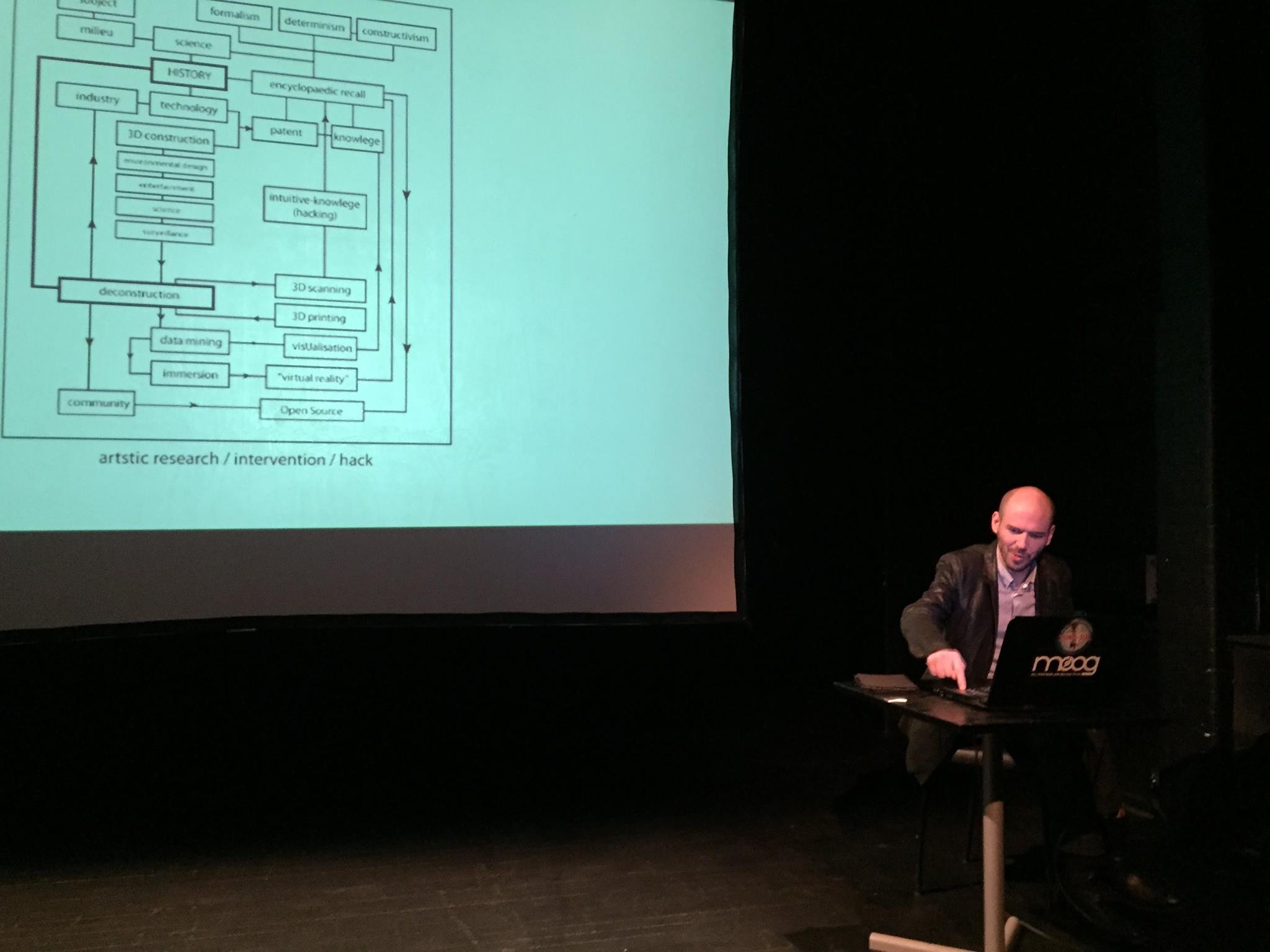

Alexander König is a media scientist, researcher and artist living in Leipzig. He works at the Bauhaus University Weimar and freelances in the fields of programming, machine learning and media technology. König completed his PhD on the Digital Movement Image in Cultural Theory (Prof. Diedrich Diederichsen) at the University of Fine Arts in Vienna. He currently researches and teaches in the field of machine learning / artificial intelligence with a focus on critical approaches. The interface between culture, technology and politics is the subject of his research projects and educational concepts. By translating knowledge-centred structural analyses into practical projects, he aims to bridge the existing gap between art and technology and pave the way for unhindered communication and transdisciplinary discourse.

Contact: akoenig (at) media-art-theory.com